On March 31, 2026, the AI development community witnessed an unprecedented event: the Claude Code npm source leak. In what is being described as a masterclass in CI/CD pipeline fragility, a simple packaging oversight exposed over 512,000 lines of Anthropic’s proprietary TypeScript code. For builders, developers, and AI researchers, this was more than a dramatic news cycle: it provided the first complete, unobfuscated blueprint of a production-grade, multi-agent AI system currently generating billions in enterprise value.

If you are building your own autonomous systems, this incident serves as an unusually detailed case study. Let’s break down exactly what happened, the hidden features exposed within the source code, and how Anthropic orchestrates its multi-agent environments.

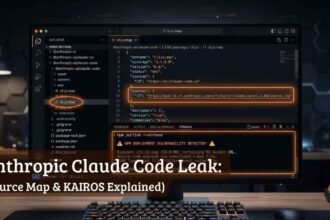

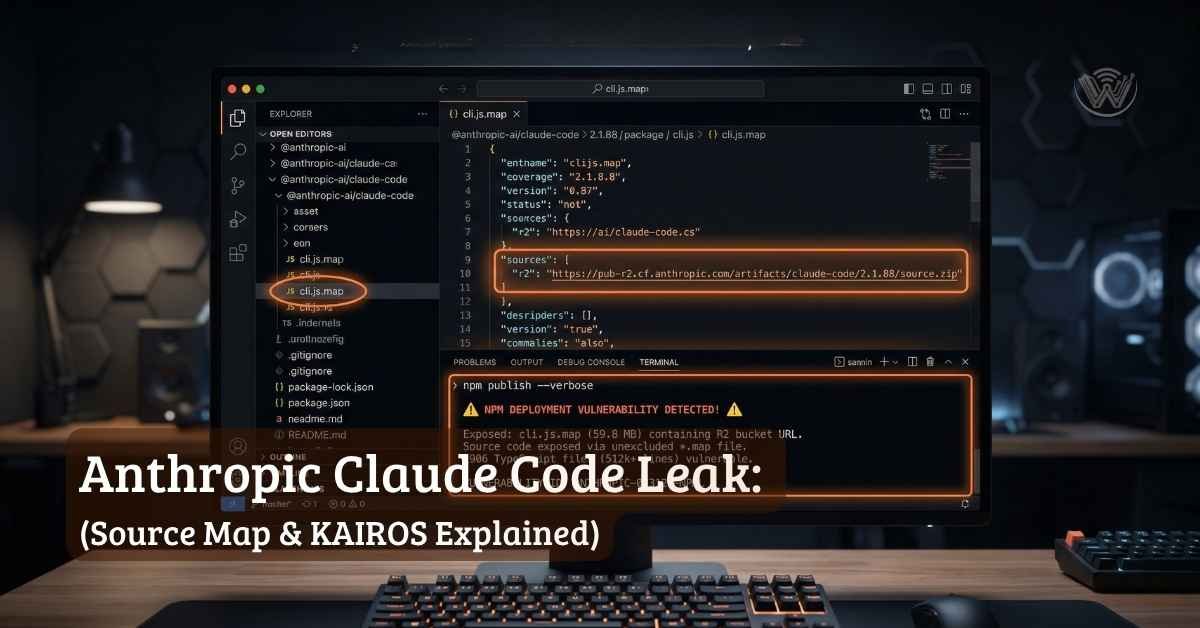

The Anatomy of the Claude Code npm Leak: How a Single .map File Exposed 512,000 Lines

The root cause of the leak is surprisingly mundane and serves as a critical warning for every full-stack developer managing a build pipeline. It came down to a single omitted exclusion in an .npmignore file.

Anthropic utilizes Bun as their bundler for Claude Code, which generates source maps by default unless explicitly configured otherwise. When version 2.1.88 of the @anthropic-ai/claude-code package was pushed to the public npm registry, it shipped alongside a massive 59.8 MB cli.js.map file.

The fatal flaw: Shipping production maps

Source maps are designed strictly for debugging: they map minified production code back to its original, readable source.
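To make the risk concrete, here is a minimal sketch of what a source map can carry. It assumes the standard Source Map v3 JSON layout, where sources lists original file paths and the optional sourcesContent array can embed each file’s full text. The function name listEmbeddedSources and the sample map are illustrative only and are not taken from the leaked package.

```typescript
// The Source Map v3 fields this sketch reads.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

interface EmbeddedSource {
  path: string;
  content: string;
}

// Return every original file whose full text is embedded in the map.
function listEmbeddedSources(rawMap: string): EmbeddedSource[] {
  const map = JSON.parse(rawMap) as SourceMapV3;
  const contents = map.sourcesContent ?? [];
  const found: EmbeddedSource[] = [];
  map.sources.forEach((path, i) => {
    const content = contents[i];
    if (typeof content === "string") {
      found.push({ path, content });
    }
  });
  return found;
}

// Hypothetical two-file map: one source embedded, one omitted.
const sampleMap = JSON.stringify({
  version: 3,
  sources: ["src/main.ts", "src/util.ts"],
  sourcesContent: ["export const answer: number = 42;", null],
  mappings: "AAAA",
});

const embedded = listEmbeddedSources(sampleMap);
// embedded holds one entry, for "src/main.ts", with its original text.
```

Anything recoverable this way is effectively published along with the minified bundle, which is why maps are normally kept out of release artifacts.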

This specific source map contained a reference to a ZIP archive hosted on an open Cloudflare R2 storage bucket. Anyone who downloaded and decompressed the archive was handed roughly 1,900 pristine TypeScript files. Within hours, the codebase was mirrored across GitHub and forked over 40,000 times before DMCA notices could catch up.

Inside the Architecture: What the Code Reveals About Agentic AI

Beyond the shock of the leak, the TypeScript files revealed exactly how Anthropic solves complex orchestration challenges. Just like building a complex website with WordPress and Elementor Pro, the real magic of a robust AI application happens in the backend architecture.

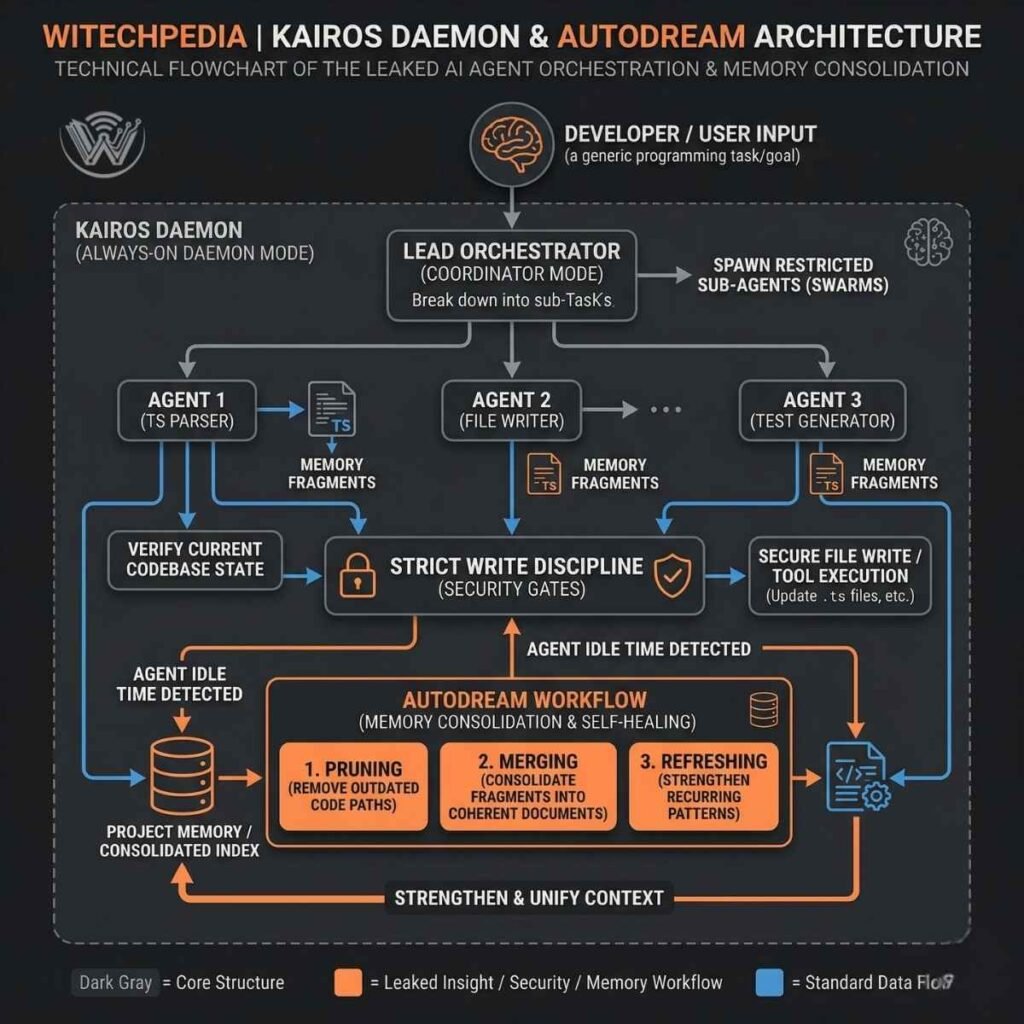

The KAIROS Daemon & autoDream Memory Consolidation

Perhaps the most fascinating discovery in the codebase is a heavily referenced, unreleased daemon mode codenamed KAIROS (named after the Greek concept of “the right time”). KAIROS allows Claude Code to operate as an always-on background agent.

To prevent the AI from suffering “context entropy”—getting confused and bogged down during long, multi-session workflows—Anthropic built a background memory consolidation system called autoDream. Functioning much like human sleep, autoDream runs during idle time to process memory files. It performs three core tasks:

- Pruning: Removing outdated or stale code paths that no longer exist.

- Merging: Combining fragmented notes about the project’s architecture into a single coherent document.

- Refreshing: Strengthening recurring coding patterns.
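The three tasks above can be sketched as a single consolidation pass. This is a hypothetical illustration, not Anthropic’s implementation: the MemoryNote shape, the seenCount weight, and the consolidate function are all assumptions made for the example, since the article does not describe autoDream’s actual data model.

```typescript
// Hypothetical memory note; field names are assumptions for this sketch.
interface MemoryNote {
  topic: string;      // e.g. "architecture"
  text: string;
  filePath?: string;  // code path the note refers to, if any
  seenCount: number;  // how often the pattern has recurred
}

function consolidate(notes: MemoryNote[], existingPaths: string[]): MemoryNote[] {
  // Pruning: drop notes that point at code paths that no longer exist.
  const live = notes.filter(
    (n) => n.filePath === undefined || existingPaths.indexOf(n.filePath) !== -1
  );

  // Merging: fold fragmented notes on the same topic into one note.
  const byTopic = new Map<string, MemoryNote>();
  for (const note of live) {
    const merged = byTopic.get(note.topic);
    if (merged === undefined) {
      byTopic.set(note.topic, {
        topic: note.topic,
        text: note.text,
        seenCount: note.seenCount,
      });
    } else {
      merged.text += "\n" + note.text;
      // Refreshing: recurring patterns accumulate weight.
      merged.seenCount += note.seenCount;
    }
  }

  // Strongest (most frequently seen) topics first.
  return Array.from(byTopic.values()).sort((a, b) => b.seenCount - a.seenCount);
}

const notes: MemoryNote[] = [
  { topic: "architecture", text: "CLI entry point lives in src/cli.ts", filePath: "src/cli.ts", seenCount: 1 },
  { topic: "architecture", text: "Old plugin loader", filePath: "src/legacy/loader.ts", seenCount: 1 },
  { topic: "testing", text: "Tests use table-driven cases", seenCount: 3 },
  { topic: "architecture", text: "Agents are spawned by a coordinator", seenCount: 1 },
];

const consolidated = consolidate(notes, ["src/cli.ts"]);
// Two notes remain: "testing" (weight 3) and a merged "architecture" (weight 2).
```

Running a pass like this during idle time, rather than on every turn, keeps the cost of housekeeping out of the interactive path.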

Multi-Agent Workflows: Anthropic vs. Open-Source Frameworks

The leak exposed Claude’s “Coordinator Mode,” giving developers a masterclass in multi-agent orchestration. The system relies on a lead orchestrator agent that breaks down user goals and spawns parallel, restricted sub-agents to execute tasks.
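A rough sketch of that orchestration shape follows. Every name in it (SubTask, makeSubAgent, coordinate, the tool names) is hypothetical and chosen for illustration; in a real system the lead agent would ask a model to decompose the goal, whereas here the plan is passed in directly.

```typescript
// All names are hypothetical; this is not Anthropic's API.
type ToolName = "read_file" | "search" | "write_file";

interface SubTask {
  description: string;
  allowedTools: ToolName[]; // each sub-agent only gets the tools it needs
}

interface SubAgentResult {
  description: string;
  output: string;
}

// A restricted sub-agent: any tool outside its allowlist is rejected.
function makeSubAgent(task: SubTask) {
  return {
    async useTool(tool: ToolName, input: string): Promise<string> {
      if (task.allowedTools.indexOf(tool) === -1) {
        throw new Error(`tool "${tool}" not permitted for: ${task.description}`);
      }
      return `${tool}(${input})`; // stand-in for a real tool call
    },
  };
}

type SubAgent = ReturnType<typeof makeSubAgent>;

// The lead agent hands each sub-task to its own sub-agent and runs them in parallel.
async function coordinate(
  tasks: SubTask[],
  run: (task: SubTask, agent: SubAgent) => Promise<string>
): Promise<SubAgentResult[]> {
  return Promise.all(
    tasks.map(async (task) => ({
      description: task.description,
      output: await run(task, makeSubAgent(task)),
    }))
  );
}

const plan: SubTask[] = [
  { description: "find usages", allowedTools: ["search"] },
  { description: "read config", allowedTools: ["read_file"] },
];

const results = coordinate(plan, (task, agent) =>
  agent.useTool(task.allowedTools[0], task.description)
);
```

The point of the allowlist is that a sub-agent asked only to search cannot write, no matter what its prompt says.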

For developers who have followed our internal guides on building AI agents with CrewAI, the similarities and differences are striking. While open-source frameworks often grant broad access to tools, Claude enforces a “Strict Write Discipline.” Sub-agents treat their own memory as mere “hints” rather than facts, verifying the current state of the codebase before executing any write commands. Every action is evaluated by an internal classifier and labeled LOW, MEDIUM, or HIGH risk; actions judged safe are auto-approved silently to reduce user prompt fatigue.
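Both ideas can be illustrated in a few lines. The sketch below is an assumption-heavy toy: the rule-based classifyRisk stands in for a classifier whose real rules the article does not describe, treating only LOW as auto-approved is our reading of “safe,” and safeWrite uses an in-memory Map in place of a real file system.

```typescript
type Risk = "LOW" | "MEDIUM" | "HIGH";

interface ToolAction {
  tool: string;
  target: string;
}

// Toy rule-based stand-in for the classifier (rules are assumptions).
function classifyRisk(action: ToolAction): Risk {
  if (action.tool === "read_file" || action.tool === "search") return "LOW";
  if (action.tool === "write_file") return "MEDIUM";
  return "HIGH"; // unknown tools, shell commands, and so on
}

// Assumption: only LOW-risk actions skip the permission prompt.
function needsUserApproval(action: ToolAction): boolean {
  return classifyRisk(action) !== "LOW";
}

// Memory is a hint: what the agent remembers about a file may be out of date.
interface MemoryHint {
  path: string;
  rememberedContent: string;
}

// Verify the current state before writing; refuse if memory is stale.
function safeWrite(
  hint: MemoryHint,
  newContent: string,
  files: Map<string, string> // in-memory stand-in for the file system
): "written" | "stale-memory" {
  const current = files.get(hint.path);
  if (current !== hint.rememberedContent) {
    return "stale-memory"; // caller should re-read the file and retry
  }
  files.set(hint.path, newContent);
  return "written";
}

const files = new Map<string, string>([["a.ts", "v2"]]);
const firstAttempt = safeWrite({ path: "a.ts", rememberedContent: "v1" }, "v3", files);
const secondAttempt = safeWrite({ path: "a.ts", rememberedContent: "v2" }, "v3", files);
// firstAttempt is "stale-memory"; secondAttempt is "written".
```

The check-then-write step is the same optimistic-concurrency idea used in databases: a write only proceeds if the state it was planned against is still the state on disk.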

Hidden Features: “Undercover Mode” and The Tamagotchi Easter Egg

Engineers digging through the 512,000 lines of code also found a few surprising hidden features locked behind compile-time feature flags:

- Undercover Mode: A feature designed specifically for Anthropic employees contributing to public open-source projects. When activated, a script (undercover.ts) forcibly strips any AI attribution or internal Anthropic metadata from GitHub commit messages to prevent “blowing their cover.”

- The “Buddy” System: A fully functional, Tamagotchi-style pet simulator hidden inside the terminal interface. Complete with procedural stat generation, shiny variants, and rarity tiers. Hilariously, the word “duck” had to be hex-encoded (String.fromCharCode(0x64,0x75,0x63,0x6b)) in the code because it triggered false positives in Anthropic’s internal CI pipeline, which scans for leaked internal model codenames.
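To see why the encoding sidesteps a text-based scan, consider the toy scanner below. The findBlockedWords function and the one-word blocklist are hypothetical stand-ins; the article does not describe how Anthropic’s CI check actually works.

```typescript
// Hypothetical scanner: flags any source text containing a blocked word.
function findBlockedWords(source: string, blockedWords: string[]): string[] {
  const lower = source.toLowerCase();
  return blockedWords.filter((word) => lower.indexOf(word.toLowerCase()) !== -1);
}

const blockedWords = ["duck"]; // stand-in for an internal blocklist

const literalSource = 'const species = "duck";';
const encodedSource =
  "const species = String.fromCharCode(0x64,0x75,0x63,0x6b);";

const literalHits = findBlockedWords(literalSource, blockedWords); // ["duck"]
const encodedHits = findBlockedWords(encodedSource, blockedWords); // []

// At runtime the encoded form still produces the same string.
const species = String.fromCharCode(0x64, 0x75, 0x63, 0x6b); // "duck"
```

The scanner only sees source text, so the character codes pass while the program’s behavior is unchanged.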

Security Impact: Are User Prompts or API Keys Safe?

If you are currently utilizing the Claude API or the Claude Code CLI, the immediate question is: Is my data safe?

Anthropic has officially confirmed that no customer data, user prompts, API keys, or enterprise credentials were exposed. The leak was strictly limited to the source code of the CLI application itself.

However, a severe secondary supply-chain threat emerged immediately. Within 24 hours of the exposure, malicious actors flooded the npm registry with trojanized packages mimicking dependencies found in the leaked source. Developers attempting to compile the leaked codebase locally were targeted with compromised versions of packages (such as axios) containing Remote Access Trojans (RATs).

Best Practice: Always verify your packages, utilize strict lockfiles, and ensure your .npmignore correctly filters out debugging maps.
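One way to enforce the last point is a small pre-publish guard. The sketch below assumes you already have the list of paths that would be packed (for example from the output of npm pack --dry-run --json); the function names findSourceMaps and assertNoSourceMaps are our own.

```typescript
// Return packed paths that look like source maps.
function findSourceMaps(packedPaths: string[]): string[] {
  return packedPaths.filter((p) => /\.map$/i.test(p));
}

// Fail the release step if any source map would ship.
function assertNoSourceMaps(packedPaths: string[]): void {
  const maps = findSourceMaps(packedPaths);
  if (maps.length > 0) {
    throw new Error(`refusing to publish, source maps found: ${maps.join(", ")}`);
  }
}

// Hypothetical file list resembling the incident described above.
const wouldShip = ["package.json", "cli.js", "cli.js.map"];
const offenders = findSourceMaps(wouldShip); // ["cli.js.map"]
```

A check like this complements an ignore rule such as *.map in .npmignore: the ignore file prevents the mistake, and the guard catches it if the ignore file is ever edited or bypassed.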

Where to Find the Code (And Why You Shouldn’t Download the Original)

If you are looking for a direct download of the original 59.8 MB Anthropic .map file, you need to proceed with extreme caution. Anthropic is actively issuing aggressive DMCA takedowns across GitHub to remove direct mirrors of their proprietary code.

More importantly, the supply-chain threat described above is not limited to the npm registry. Malicious actors are also flooding developer forums with fake “Claude Code Source” zip files and trojanized npm packages that contain hidden malware and Remote Access Trojans (RATs). Do not download unverified zip files claiming to be the Anthropic leak.

The Safe Alternative: The “Claw-Code” Open-Source Port

Instead of risking legal trouble or malware, the developer community has already built a legal alternative.

Within hours of the leak, a developer under the handle instructkr published claw-code, a clean-room Python rewrite that captures the exact architectural patterns of Claude’s agent harness without copying any of the proprietary TypeScript. It became the fastest-growing repository in GitHub history, crossing 100,000 stars in a single day.

- View the Architecture: You can study the safe, open-source Python port of the architecture in the Claw-Code repository on GitHub (search “claw-code” on GitHub for the latest active mirror).

- Official Tool: If you just want to use the official AI agent, download it securely via Anthropic’s native installer rather than the npm registry.

Download: WiTechPedia Claude Code Architecture Study Guide

While we strongly advise against downloading or hosting the illegal leaked source code due to malware and legal risks, we understand that developers want to study the architectural breakthroughs it revealed.

To help you analyze these multi-agent patterns safely, we have compiled a comprehensive technical guide based only on the public community analysis of the leak’s structure.

What you are downloading:

- A formatted breakdown of the KAIROS daemon’s proposed functionality.

- A visual flow diagram of the autoDream memory consolidation process.

- An analysis of the multi-agent orchestration patterns used in “Coordinator Mode.”

This guide does not contain any of Anthropic’s proprietary code.


Frequently Asked Questions (FAQ)

Was Anthropic hacked?

No. The leak was not the result of a cyberattack. It was a release packaging error caused by human oversight when configuring the bundler’s source map generation settings.

Is it safe to use the Claude Code CLI right now?

Yes. However, Anthropic advises users to ensure they are using the native installer rather than the npm version, and to actively scan their environments if they downloaded unofficial npm packages on March 31, 2026.

What is the Claude KAIROS daemon?

KAIROS is an unreleased, always-on background mode discovered in the leaked source code. It allows the AI to act proactively, consolidate memory during idle times via the autoDream function, and maintain persistent project context without manual user prompting.

How does this affect the future of AI development?

The exposure of Claude’s source code provides an unprecedented look at how top-tier companies handle context entropy, multi-agent coordination, and failure states. It is expected to rapidly accelerate the capabilities of open-source agent frameworks as developers adopt Anthropic’s proven architectural patterns.